Availability

| Edition | Deployment Type |
|---|---|
| Community & Enterprise | Self-Managed, Hybrid |

Use cases

- Centralized AI Access Control: Manage all your organization’s LLM connections (OpenAI, Anthropic, etc.) in one place, controlling which teams and applications can access specific models.

- Cost Management and Budgeting: Set monthly spending limits globally per LLM or per application to prevent unexpected AI costs and track usage against budgets.

- Data Privacy Enforcement: Assign privacy levels to LLMs to ensure sensitive data is only processed by approved, secure models, preventing data leaks to public LLMs.

LLM Provider

The LLM Management system acts as the core registry for all AI models accessible through Tyk AI Studio. It bridges the gap between external AI providers and internal consumers (Applications and Chat). Key components of an LLM entity include:

- Basic Info: Name, descriptions, vendor, and active status.

- Connection: API credentials, endpoints, and the default model to use.

- Access & Security: Privacy scores, allowed model regex patterns, and namespace restrictions.

- Budgeting: Monthly spending limits and budget cycle start dates.

- Extensibility: Support for attaching plugins that execute in the AI Gateway during the request lifecycle.
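To make the grouping above concrete, here is a minimal sketch of an LLM entity as a Python dataclass. Every field name is hypothetical and chosen for illustration only; this is not the Tyk AI Studio schema or API, and the only validation shown is the 0 to 100 privacy range and the required name.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class LLMProvider:
    """Hypothetical in-memory model of an LLM entity (not the real schema)."""

    # Basic info
    name: str
    vendor: str
    short_description: str = ""
    long_description: str = ""
    active: bool = True

    # Connection
    api_endpoint: str = ""
    api_key: str = ""
    default_model: str = ""

    # Access and security
    privacy_score: int = 0
    allowed_models: list[str] = field(default_factory=list)  # regex patterns
    namespaces: list[str] = field(default_factory=list)  # empty = global (assumed)

    # Budgeting
    monthly_budget: Optional[float] = None  # None = no limit
    budget_start_date: Optional[date] = None

    # Extensibility
    plugins: list[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Reject obviously invalid values early.
        if not self.name:
            raise ValueError("name is required")
        if not 0 <= self.privacy_score <= 100:
            raise ValueError("privacy_score must be between 0 and 100")
```

An instance could then be built with `LLMProvider(name="OpenAI GPT-4", vendor="openai", default_model="gpt-4", privacy_score=40)`.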

Configuration

Administrators can configure connections to different LLM providers through the UI or API. The configuration includes:

- Vendor Selection: Choose from supported vendors like `openai`, `anthropic`, `vertex` (Google Vertex AI), `google_ai`, `huggingface`, `ollama`.

- Authentication: Securely provide API keys or credentials.

- Model Restrictions: Use regex patterns (e.g., `gpt-4.*`) to whitelist specific models from a vendor.

- Privacy Levels: Define how data is protected by controlling LLM access based on its sensitivity (0 lowest - 100 highest).

- Budget Control: Set a `MonthlyBudget` to limit total spending across all applications using this LLM configuration.

- Plugins: Attach plugins that execute in the AI Gateway when a request flows through the REST endpoint.

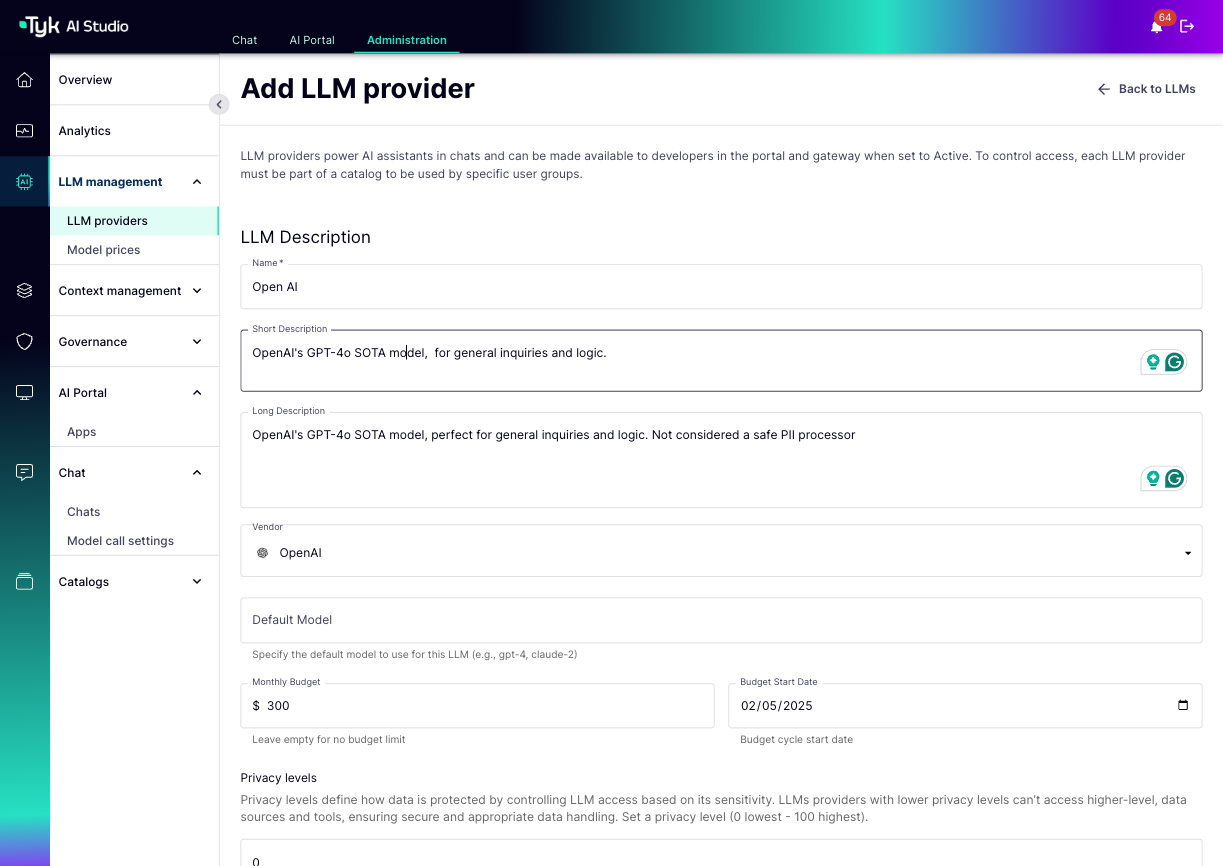
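The effect of a model restriction pattern such as `gpt-4.*` can be illustrated with a short Python sketch. The function name is hypothetical, and two behaviors are assumptions rather than documented Tyk AI Studio behavior: that a pattern must match the whole model name, and that an empty pattern list allows every model.

```python
import re


def is_model_allowed(model: str, allowed_patterns: list[str]) -> bool:
    """Return True if `model` matches any pattern in the allow-list.

    Assumptions (not confirmed by the documentation): an empty list allows
    every model, and a pattern must match the entire model name.
    """
    if not allowed_patterns:
        return True
    return any(re.fullmatch(pattern, model) for pattern in allowed_patterns)


patterns = [r"gpt-4.*"]
print(is_model_allowed("gpt-4", patterns))          # True
print(is_model_allowed("gpt-4-turbo", patterns))    # True
print(is_model_allowed("gpt-3.5-turbo", patterns))  # False
```

Because `.` matches any character, `gpt-4.*` also matches names such as `gpt-4o`; a stricter pattern is needed if only dash-separated variants should pass.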

How to Create an LLM Provider

You can create and manage LLM providers through the Tyk AI Studio Admin UI.

- Navigate: Go to the LLM providers section in the Admin UI. This view lists all configured LLMs, showing their Name, Short Description, Vendor, Privacy Level, and whether they are Proxied.

- Add New LLM: Click “Add LLM provider”.

- Fill in the LLM Details:

  - Name: A user-friendly name for this configuration (Required).

  - Short/Long Description: Provide descriptions for the LLM.

  - Vendor: Select the LLM vendor (e.g., OpenAI, Anthropic).

  - Default Model: Specify the default model to use for this LLM (e.g., `gpt-4`, `claude-2`).

  - Monthly Budget: Set a monthly budget limit (leave empty for no limit) and a Budget Start Date.

  - Privacy levels: Set a privacy level (0 lowest - 100 highest). LLMs with lower privacy levels can’t access higher-level data sources and tools.

  - Allowed Models: Add regex patterns to whitelist specific models (e.g., `gpt-4.*` for all GPT-4 models).

  - In the Access Details section:

    - API Endpoint: Provide the endpoint URL (required if enabling an LLM for the AI Gateway, even for providers with default URLs).

    - API Key: Securely provide the necessary authentication credentials.

  - In the Portal Display Information section:

    - Logo URL: Configure the logo used in the Portal UI for end-users.

  - Enabled in proxy: Must be set to active to use this LLM in the proxy. For example, this is required before you can create an app that uses this LLM.

  - Plugins: Select plugins to attach to this LLM. They execute in the order selected during the request lifecycle.

  - Available Namespaces: Select which edge namespaces this configuration should be available to (leave empty for global availability).

- Save: Save the configuration to make the LLM provider available.
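The privacy level and monthly budget settings described in the steps above can be read as two simple checks. The sketch below is illustrative only: the function names are hypothetical, and the handling of equal privacy levels and of spend exactly at the limit are assumptions, not documented Tyk AI Studio behavior.

```python
from typing import Optional


def can_use_resource(llm_privacy: int, resource_privacy: int) -> bool:
    """An LLM with a lower privacy level cannot access a higher-level
    data source or tool. Equal levels are assumed to be allowed."""
    return llm_privacy >= resource_privacy


def within_budget(spent: float, monthly_budget: Optional[float]) -> bool:
    """An unset budget (None) means no limit, mirroring 'leave empty for
    no limit'. Spend at or above the limit is assumed to be blocked."""
    return monthly_budget is None or spent < monthly_budget


print(can_use_resource(llm_privacy=30, resource_privacy=70))  # False
print(can_use_resource(llm_privacy=80, resource_privacy=70))  # True
print(within_budget(spent=120.0, monthly_budget=None))        # True
print(within_budget(spent=520.0, monthly_budget=500.0))       # False
```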